Most voice AI vendor websites list dozens of potential use cases. Customer service, sales, scheduling, surveys, collections, onboarding. The implication is that voice agents can do all of it, everywhere, right now. The reality is more specific. Voice AI adoption thrives under certain conditions and struggles under others. Understanding the difference is more useful than a feature list.

The wedge pattern

Andreessen Horowitz observed a consistent adoption pattern in its 2025 voice agent update: enterprises almost never go from full human call-taking to full AI call-taking in one step. Instead, they start with a “wedge,” a single, well-defined call type that represents a small percentage of total volume [1]. If it works, they expand. If it doesn’t, the risk was contained.

This pattern matters because it reflects how organisations actually adopt voice AI: not as a wholesale replacement, but as a controlled experiment with measurable outcomes.

The most effective wedges share a few characteristics: high call volume, repetitive structure, clear success criteria, and existing budget for the calls being replaced [1]. When these conditions are met, voice agents tend to outperform expectations. When they’re missing, deployments stall.

Where it’s working

Financial services leads enterprise adoption, accounting for roughly 32.9% of the conversational AI market [2]. Banks represent about a quarter of global contact centre spend and more than $100 billion in annual BPO costs [1]. The use cases that work best are structured and compliance-friendly: account inquiries, balance checks, payment reminders, fraud alerts, and authentication. These calls follow predictable patterns, and the cost of handling them with human agents is well understood.

Healthcare is the fastest-growing segment, with voice AI adoption expanding at a 37.8% CAGR [3]. The strongest wedges here are administrative, not clinical: appointment scheduling, prescription refill requests, insurance verification, and billing inquiries. A mental health provider deployed a voice AI receptionist and saw new patient intakes increase by 60%, translating to $1.7 million in projected additional revenue, simply because calls that previously went unanswered were now being handled [4]. The common thread is not sophisticated medical reasoning, but reliable handling of high-volume administrative calls that consume front-desk capacity.

Recruiting and staffing is an unexpectedly strong early adopter. a16z reports that staffing agencies replacing first-round screening calls with AI interviews saw roughly 90% of AI-screened candidates advance to the first round, compared with about 50% under human screeners [1]. For agencies paid by candidate volume, this nearly doubled throughput, while also adding 24/7 scheduling and consistent scoring. The pain point is especially strong in high-volume staffing, where the screening call is a bottleneck, not a value-add.

Retail and e-commerce companies use voice agents primarily for order tracking, returns processing, and FAQ handling during peak seasons. The call patterns are predictable, the knowledge base is structured, and the volume spikes are exactly what AI handles better than human teams that need weeks of seasonal hiring and training [5].

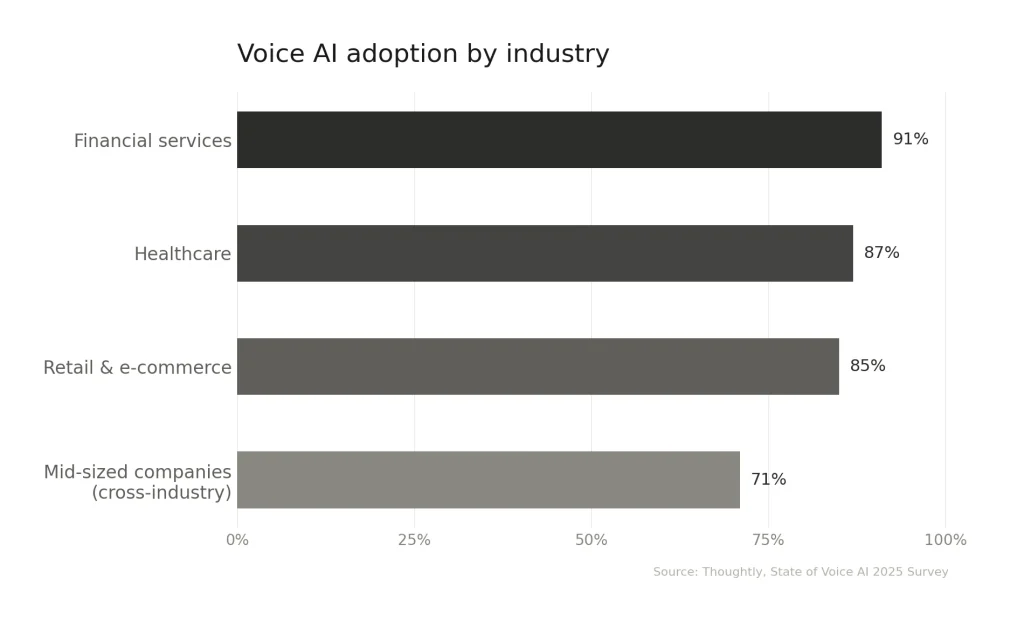

A 2025 industry survey by Thoughtly shows that adoption is highest in sectors with large call volumes and a structural need for 24/7 availability [5].

Where it struggles

Not every use case is ready for voice AI. The pattern of failure is as consistent as the pattern of success.

Complex, multi-step problem solving remains difficult. When a caller’s issue requires navigating ambiguity, pulling information from multiple systems, or exercising judgement, current voice agents hit their limits. They can handle “What’s my account balance?” but not “I was charged twice, and my refund was applied to the wrong account, and I also need to update my billing address.”

Emotionally sensitive interactions are a poor fit. Complaints, escalations, and cancellations where the caller is frustrated all require empathy, de-escalation, and the ability to deviate from a script. Voice AI can detect sentiment, but acting on it appropriately in real time is a different problem. Current deployments reflect this: only 15% of businesses rely solely on voice AI for customer interactions, while 85% use hybrid models that combine AI with human agents [5].

Low-volume, high-complexity calls don’t generate enough ROI to justify the implementation effort. If a business handles 20 specialised calls per day, automating them with a voice agent is unlikely to pay for itself. The economics work at scale with repetitive patterns.
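To make the volume argument concrete, here is a minimal break-even sketch. Every figure in it (cost per human-handled and AI-handled call, containment rate, a one-off implementation cost, 22 working days a month) is a hypothetical assumption chosen for illustration, not data from the sources above, and the model deliberately ignores ongoing licence and maintenance fees.

```python
import math


def payback_months(calls_per_day: float, containment_rate: float,
                   human_cost_per_call: float, ai_cost_per_call: float,
                   implementation_cost: float, working_days: int = 22) -> float:
    """Months until monthly savings repay a one-off implementation cost.

    Simplified, hypothetical model: only calls the agent fully contains
    save money, and each one saves the difference between the human and
    AI cost per call. Returns math.inf if there is no saving at all.
    """
    contained_per_month = calls_per_day * working_days * containment_rate
    monthly_saving = contained_per_month * (human_cost_per_call - ai_cost_per_call)
    if monthly_saving <= 0:
        return math.inf
    return implementation_cost / monthly_saving


# Hypothetical inputs: $6 per human-handled call, $1 per AI-handled call,
# $50,000 to build, integrate, and test the agent.
# Low-volume, complex calls: few calls, and fewer of them fully contained.
low = payback_months(calls_per_day=20, containment_rate=0.3,
                     human_cost_per_call=6, ai_cost_per_call=1,
                     implementation_cost=50_000)
# High-volume, repetitive calls: same costs, far more contained calls.
high = payback_months(calls_per_day=2_000, containment_rate=0.7,
                      human_cost_per_call=6, ai_cost_per_call=1,
                      implementation_cost=50_000)

print(f"low-volume payback:  {low:.1f} months")   # 75.8 months
print(f"high-volume payback: {high:.1f} months")  # 0.3 months
```

Under these made-up numbers, the low-volume deployment takes more than six years to pay back, while the high-volume one pays back within its first month. The exact figures matter less than the shape: savings scale with the number of contained calls, while the implementation cost stays roughly fixed.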

Heavily regulated conversations where a single wrong answer carries legal consequences, such as financial advice, medical diagnosis, or legal counsel, remain largely human-operated. The regulatory surface is larger, and the cost of an AI error is disproportionate to the savings.

What determines success

Across industries, the deployments that work share a consistent profile. The call type is well-defined. The knowledge base is structured and maintained. The success metric is clear, whether that’s containment rate, cost per call, or resolution time. There’s a fallback path to a human agent. And the organisation treats the launch as the beginning of an optimisation process, not the end.

The deployments that fail tend to skip one or more of these conditions: poorly defined scope, unstructured knowledge, no escalation path, or unrealistic expectations about what “AI-handled” means in practice.

What to take from this

Voice AI is not a universal solution. It’s a specific tool that works well under specific conditions. The companies seeing real results are the ones that start narrow, pick a use case where the economics and the call structure align, and expand based on evidence.

If the calls are high-volume, repetitive, and structured, voice AI will likely outperform human agents on cost, availability, and consistency. If they’re complex, emotional, or low-volume, a human is still the better answer. The skill is in knowing which calls belong in which category, and being honest about it.

Sources

[1] Andreessen Horowitz (a16z), “AI Voice Agents: 2025 Update.” Olivia Moore. January 2025.

[2] Synthflow, “Top 10 Enterprise AI Voice Agent Vendors for Contact Centers in 2025.” October 6, 2026.

[3] Grand View Research, “AI Voice Agents in Healthcare Market Report, 2030.”

[4] RingCentral, “Top voice AI agent use cases by industry.” January 30, 2026.

[5] Thoughtly, “The State of Voice AI in 2025: An Industry Report & Survey.” 2025.